
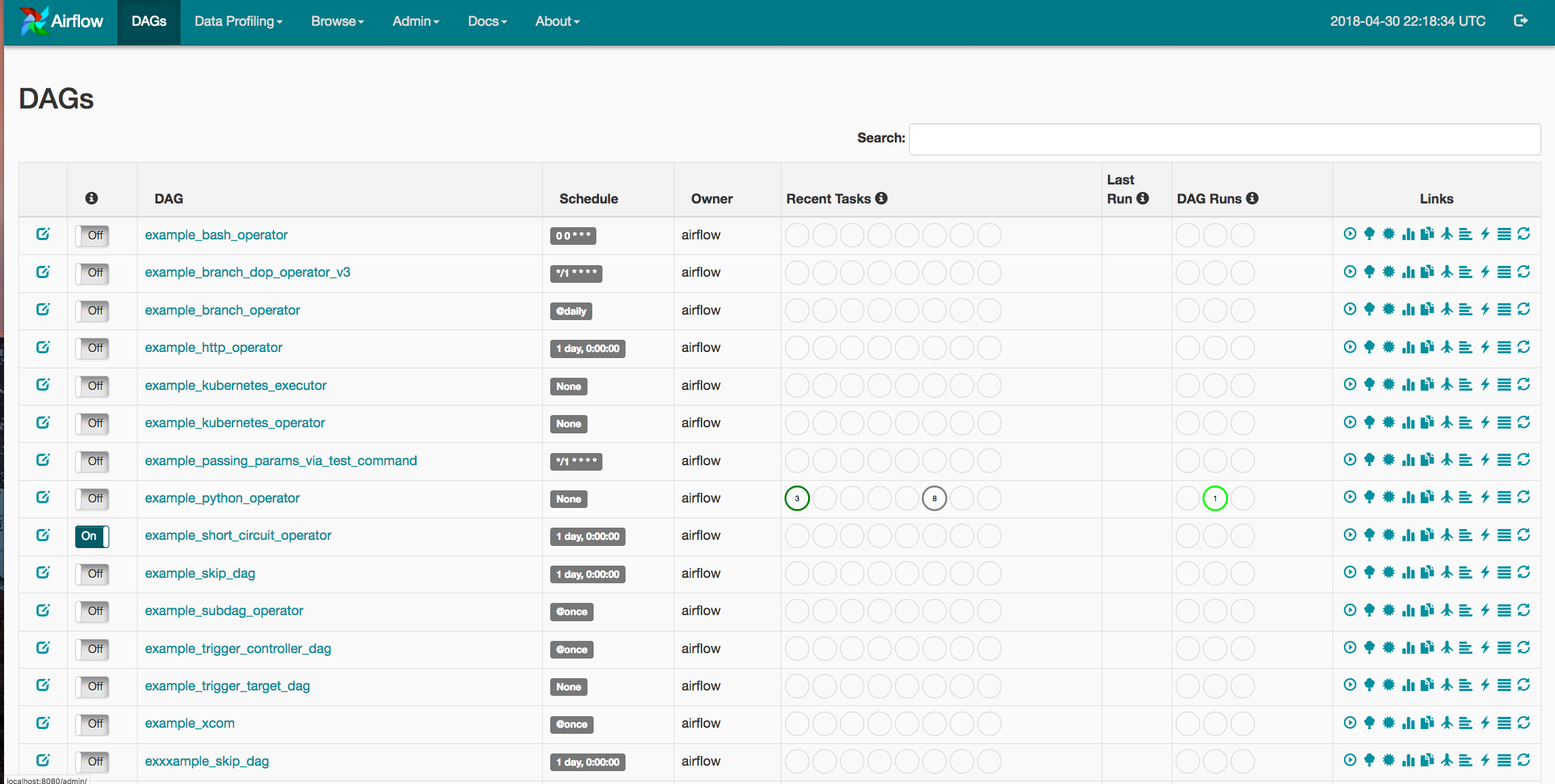

And let's say we have two different tasks, first one is lightweight, it only reads data from an external database and reports them via email. We can easily imagine a situation where each worker is allowed to run concurrently some number of tasks, let's say 2. As great as it is, there is one main drawback – it scales based on the number of tasks, not on the resources needed to run them. We have seen how we can use KEDA to drive auto-scaling of workers based on the number of tasks. Just keep in mind that with KEDA, persistence on your workers needs to be disabled and it supports only CeleryExecutor and CeleryKubernetesExecutor executors. # CeleryExecutor or CeleryKubernetesExecutor required. With Airflow Helm chart, enabling KEDA is pretty simple, all you need to do is configure a few variable in the values.yaml: workers: Of course you can also set a limit to a maximum number of workers running at a time, so it won't drain your resources. Simply put, KEDA will query the database every n seconds for the number of tasks in a state RUNNING or QUEUED and if the number exceeds maximum free tasks slots, it will spawn new workers. With new Airflow and CeleryExecutor, you can utilize KEDA to handle auto-scaling of workers according to the number of tasks in the queue. That means that some workers are running without processing anything and so you are paying for nothing! This would be fine, as long as you don't mind unnecessary long waiting times, the main downside is when only a small number of tasks are scheduled or none at all. If too many tasks are scheduled, they would just wait until they are finally processed. In the simplest deployment, we could spawn a fixed number of workers with fixed number of tasks allowed to be run in parallel on each of them. You are able to set a limit of maximal tasks running on one worker with the environment variable AIRFLOW_CELERY_WORKER_CONCURRENCY. They manage one to many CeleryD processes to execute the desired tasks.

CeleryExecutor and KEDAĬelery is the default executor in Airflow's Helm chart. When you create a task, it's sent to the queue to which workers are listening and can pick up the task to do.Īirflow's executors are the mechanism by which task instances are run.ĭepending on your needs, you can select fromĪs the name suggests, local executors are great for debugging purposes on your localhost, they are not meant for the production usage.įrom the production options, we will look at the Celery and Kubernetes executor along with the Kubernetes pod operator.

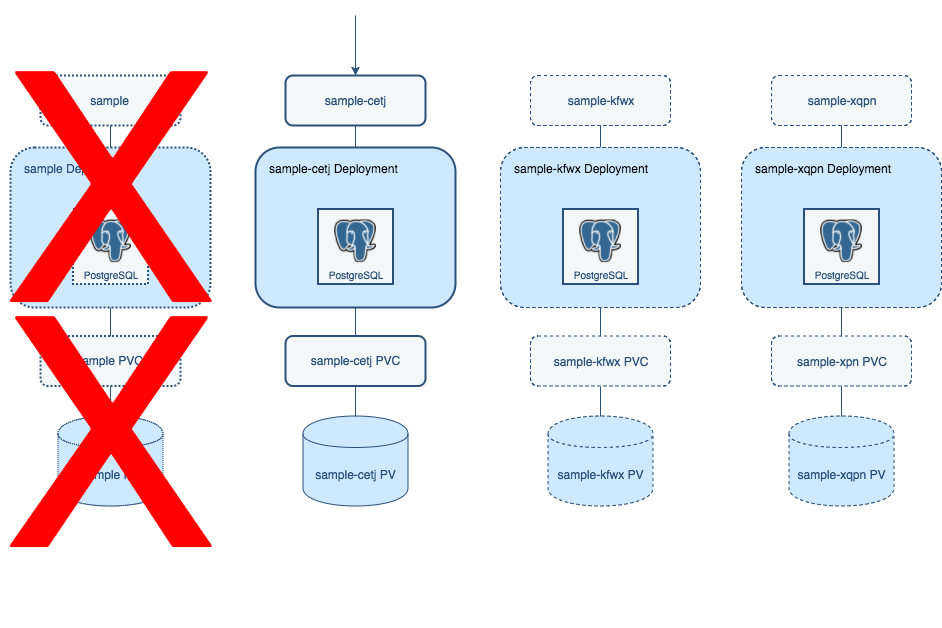

They are daemons that actually execute the logic of tasks. Workers are an essential part of Airflow. In a previous post, we deployed Kubernetes cluster in AWS and Airflow on Kubernetes cluster (EKS) using Fargate nodes. Want to get up and running fast in the cloud? We provide cloud and DevOps consulting to startups and small to medium-sized enterprise.
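To make the fixed-pool trade-off concrete, here is a minimal Python sketch. `airflow_env_var` shows how Airflow's `AIRFLOW__{SECTION}__{KEY}` naming convention yields the worker-concurrency variable, and `fixed_pool` is a hypothetical helper (it treats all task slots as equal and ignores task duration) that splits a batch of scheduled tasks into running, waiting, and idle slots.

```python
def airflow_env_var(section: str, key: str) -> str:
    """Return the environment variable Airflow reads for [section] key.

    Airflow's convention is AIRFLOW__{SECTION}__{KEY}, with double underscores.
    """
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


def fixed_pool(workers: int, concurrency: int, scheduled_tasks: int) -> dict:
    """Split scheduled tasks across a fixed pool of Celery workers.

    Hypothetical helper: every slot is treated as equal and task duration is ignored.
    """
    slots = workers * concurrency          # total tasks that can run at once
    running = min(scheduled_tasks, slots)  # tasks that get a slot right away
    return {
        "slots": slots,
        "running": running,
        "waiting": scheduled_tasks - running,  # queued until a slot frees up
        "idle_slots": slots - running,         # capacity you pay for but don't use
    }


if __name__ == "__main__":
    print(airflow_env_var("celery", "worker_concurrency"))
    # 3 workers x 2 slots: 10 scheduled tasks, so 4 have to wait
    print(fixed_pool(workers=3, concurrency=2, scheduled_tasks=10))
    # Only 1 task scheduled: 5 of the 6 slots sit idle
    print(fixed_pool(workers=3, concurrency=2, scheduled_tasks=1))
```

With 3 workers and a concurrency of 2, ten scheduled tasks leave four waiting, while a single task leaves five of the six paid-for slots idle.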
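KEDA's scaling decision can be approximated with a small formula. The sketch below assumes it mirrors the query shipped with the official Airflow Helm chart, which divides the count of running and queued task instances by the worker concurrency and rounds up; the result is then clamped between a minimum and a maximum worker count. `desired_workers`, `min_workers`, and `max_workers` are hypothetical names, and the defaults of 0 and 10 are illustrative.

```python
def desired_workers(running: int, queued: int, concurrency: int,
                    min_workers: int = 0, max_workers: int = 10) -> int:
    """Estimate how many Celery workers KEDA would ask for.

    Assumed rule: ceil((running + queued) / concurrency), clamped to
    [min_workers, max_workers]. Names and defaults are illustrative.
    """
    if concurrency <= 0:
        raise ValueError("concurrency must be a positive integer")
    total = running + queued
    needed = (total + concurrency - 1) // concurrency  # integer ceiling division
    return max(min_workers, min(needed, max_workers))


if __name__ == "__main__":
    print(desired_workers(running=0, queued=0, concurrency=2))    # nothing to do: scale to zero
    print(desired_workers(running=3, queued=2, concurrency=2))    # 5 tasks need three 2-slot workers
    print(desired_workers(running=40, queued=15, concurrency=2))  # 28 needed, capped at 10
```

The upper clamp is what keeps a burst of queued tasks from draining your cluster's resources; the lower clamp of 0 is what lets idle workers disappear entirely.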
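The original values.yaml snippet did not survive, so the following is only a sketch of the settings the text describes, written as a Python dict that mirrors keys from the official Apache Airflow Helm chart (`executor`, `workers.keda.enabled`, `workers.keda.maxReplicaCount`, `workers.persistence.enabled`). `keda_config_problems` is a hypothetical pre-deployment check that covers only the constraints named in the text, and the cap of 5 workers is illustrative.

```python
from __future__ import annotations

SUPPORTED_EXECUTORS = {"CeleryExecutor", "CeleryKubernetesExecutor"}

# Illustrative values mirroring keys of the official Airflow Helm chart.
values = {
    "executor": "CeleryExecutor",
    "workers": {
        "keda": {
            "enabled": True,
            "maxReplicaCount": 5,  # illustrative cap on workers running at a time
        },
        "persistence": {"enabled": False},  # must be off when KEDA is used
    },
}


def keda_config_problems(values: dict) -> list[str]:
    """Return problems with a KEDA setup; an empty list means it looks OK.

    Hypothetical check covering only the constraints named in the text.
    """
    problems = []
    workers = values.get("workers", {})
    if not workers.get("keda", {}).get("enabled", False):
        problems.append("workers.keda.enabled is not true")
    if values.get("executor") not in SUPPORTED_EXECUTORS:
        problems.append(f"executor {values.get('executor')!r} is not supported by KEDA")
    # Worker persistence is treated as enabled unless explicitly turned off.
    if workers.get("persistence", {}).get("enabled", True):
        problems.append("workers.persistence.enabled must be false")
    return problems


if __name__ == "__main__":
    print(keda_config_problems(values))  # no problems expected
    bad = {"executor": "KubernetesExecutor", "workers": {"keda": {"enabled": True}}}
    print(keda_config_problems(bad))
```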
